AI Implementation Planning: The Ultimate Guide for Business 2026

AI projects often stall after early success, leaving teams stuck between ideas and real results. Without clear AI implementation planning, even strong use cases fail to scale. In this guide, MOR Software will break down a practical AI implementation strategy to help you turn experiments into measurable business outcomes.

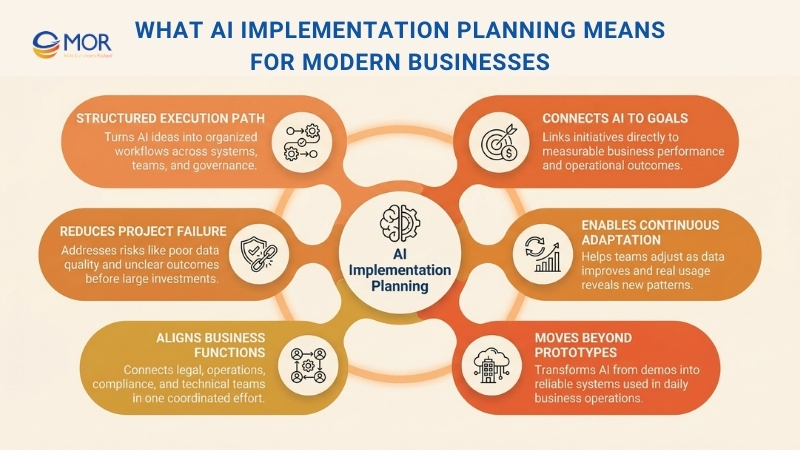

What AI Implementation Planning Means For Modern Businesses

AI implementation planning converts AI ideas into organized execution across the business. It shows how projects move from early concepts to real use across data, systems, teams, and governance. Instead of depending on scattered decisions or isolated effort, this approach creates a clear path for implementing AI in business in a structured way.

Gartner predicted that at least 30% of generative AI initiatives would be abandoned after the proof-of-concept stage by the end of 2025. The main causes it cited include low data quality, weak risk management, rising expenses, and unclear business outcomes. This is why proper planning must happen before companies commit large budgets.

Many companies misunderstand what planning for AI deployment really includes. Some treat it as a high-level strategy, which sets direction but lacks the practical steps needed for rollout. Others view it as a data science task, focusing only on building models while ignoring approvals, integration, security, and user adoption. Still others see it as a simple tech rollout, even though implementing AI in business often involves legal teams, compliance checks, operations, and external partners.

A well-defined plan links AI initiatives directly to business goals, aligns teams across departments, and prepares the organization for change. Once work begins, data improves over time, assumptions get challenged, and users interact with systems in ways teams did not expect. A structured approach helps teams adjust to these shifts without losing focus.

The difference is clear. Without proper planning, companies create impressive demos. With it, AI automation becomes part of daily operations and real business performance.

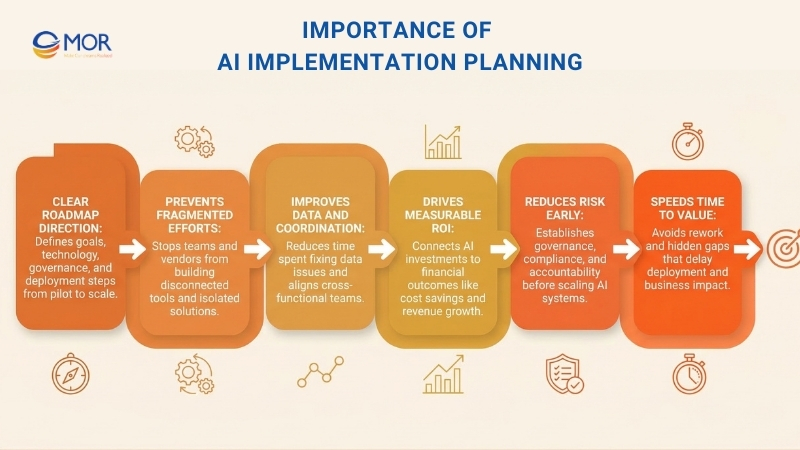

Importance Of AI Implementation Planning

Most enterprises already accept that AI can create value. Yet belief on its own does not lead to results. What separates companies that test ideas from those that scale them and see clear returns is a well-structured AI implementation planning approach built with intent.

A clear roadmap helps teams stay aligned with business goals, defines what technology is required, sets up governance early, and outlines a step-by-step path from pilot to full deployment. This type of strategic AI implementation planning keeps everyone moving in the same direction.

Without this kind of structure, AI efforts often break apart. Different business units follow their own ideas, data teams spend time fixing ongoing quality issues, and vendors come and go with isolated tools that never connect into a single system.

Only about a third of enterprises report measurable financial returns from generative AI, even though adoption is widespread. Research from PwC points to the main reason: the absence of strong AI strategy development guiding these investments across the organization.

A well-defined enterprise roadmap prevents this drift. It brings clarity to what should be built, why it matters, how it fits into current systems, who is responsible, and how it will scale. It also lowers risk by:

- Setting up governance and compliance rules early

- Improving coordination across teams

- Speeding up time to value by avoiding rework, duplicate tasks, and hidden technical gaps

Most importantly, a roadmap shifts the conversation away from tools and toward business results. It connects AI initiatives directly to financial outcomes, whether that means lowering costs, improving forecasts, automating decisions, or delivering better customer experiences.

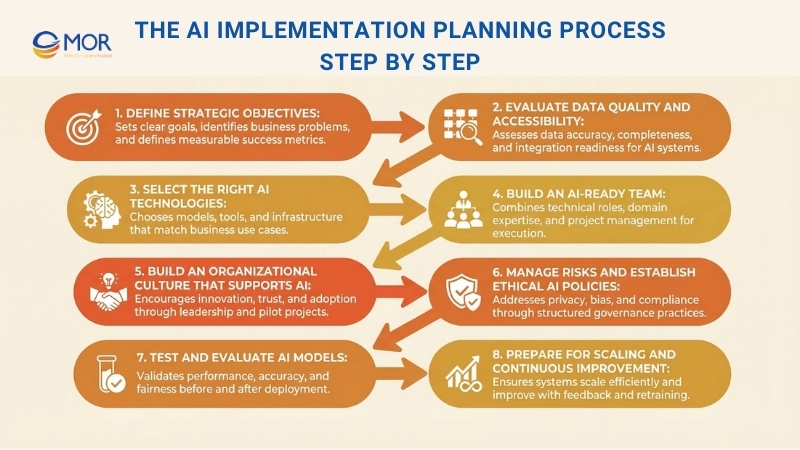

The AI Implementation Planning Process Step By Step

A structured AI implementation planning approach helps you move from ideas to real deployment with fewer risks. The steps below give teams a clear path from early planning to long-term operation and can serve as a practical execution plan.

Step 1: Define Strategic Objectives

Setting clear goals is the starting point for any successful AI initiative. You need to define the problems or opportunities that AI can address. This requires a careful review of business processes and objectives, asking questions such as: What inefficiencies should be fixed? How can generative AI improve customer experience? Which decision workflows could be improved with automation? These goals must be specific and measurable so you can track results and evaluate outcomes effectively. Case studies from other companies, often reviewed with the help of an AI business strategist, can show what may work in your situation.

Once problems are identified, you can convert them into clear objectives. These may include increasing operational efficiency by a defined percentage, improving response time in customer service, or raising the accuracy of sales predictions. Setting success metrics such as accuracy, speed, cost savings, or customer satisfaction gives your team clear targets and helps control scope. It also shapes a structured project plan for AI implementation, keeping AI development work aligned with business goals and focused on measurable results.
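To make an objective measurable, it helps to record its pre-deployment baseline and its target next to the metric itself. The minimal Python sketch below assumes a hypothetical `Objective` record (not part of any library) and measures how much of the gap between baseline and target has been closed. The same formula works whether the metric should go up (such as accuracy) or down (such as response time); the numbers are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class Objective:
    """Hypothetical record for one measurable AI objective."""
    name: str
    baseline: float  # value measured before deployment
    target: float    # value the initiative commits to reaching

    def progress(self, current: float) -> float:
        """Share of the baseline-to-target gap closed so far (1.0 means target reached).

        Dividing by (target - baseline) handles both directions: for a
        lower-is-better metric the gap is negative, so improvements still
        produce a positive result.
        """
        gap = self.target - self.baseline
        if gap == 0:
            raise ValueError("target must differ from baseline")
        return (current - self.baseline) / gap

    def is_met(self, current: float) -> bool:
        return self.progress(current) >= 1.0


# Illustrative numbers: cut average first-response time from 8 to 4 hours.
response_time = Objective("avg first response time (hours)", baseline=8.0, target=4.0)
print(response_time.progress(6.0))  # 0.5, halfway to the target
print(response_time.is_met(3.5))    # True
```

Recording the baseline up front is the design choice that matters here: without it, "improve response time" cannot be checked later.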

Step 2: Evaluate Data Quality And Accessibility

AI results are only as good as the data behind them, so reviewing data quality and accessibility is a key early step in AI implementation planning. Even the most advanced machine learning algorithms cannot perform well if the patterns they learn come from flawed data. You should assess data on accuracy, completeness, consistency, and relevance to the business problem. Reliable data sources lead to better insights, while poor-quality data can introduce bias and incorrect predictions. This step often includes cleaning data, fixing errors, filling missing values, and keeping datasets up to date. Data must also reflect real-world conditions so the model performs well in practice.
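Completeness and duplicate checks are among the easiest of these assessments to automate. The sketch below works on plain Python dictionaries; the field names, sample records, and the 0.95 completeness threshold are illustrative assumptions, and accuracy or relevance still need domain-specific checks.

```python
def completeness(records, fields):
    """Share of records with a non-empty value for each field (1.0 = fully populated)."""
    if not records:
        return {f: 0.0 for f in fields}
    return {
        f: sum(1 for r in records if r.get(f) not in (None, "")) / len(records)
        for f in fields
    }


def duplicate_count(records, key_fields):
    """Number of records whose key repeats a key already seen."""
    seen, dupes = set(), 0
    for r in records:
        key = tuple(r.get(f) for f in key_fields)
        if key in seen:
            dupes += 1
        else:
            seen.add(key)
    return dupes


def quality_report(records, fields, key_fields, min_completeness=0.95):
    """Combine the checks and list fields below the (illustrative) completeness threshold."""
    scores = completeness(records, fields)
    return {
        "completeness": scores,
        "duplicates": duplicate_count(records, key_fields),
        "failing_fields": [f for f, s in scores.items() if s < min_completeness],
    }


# Hypothetical customer extract with a blank email, a missing region, and a repeated ID.
customers = [
    {"id": 1, "email": "a@example.com", "region": "EU"},
    {"id": 2, "email": "", "region": "US"},
    {"id": 2, "email": "b@example.com", "region": None},
    {"id": 3, "email": "c@example.com", "region": "US"},
]
print(quality_report(customers, ["id", "email", "region"], ["id"]))
```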

AI systems also need proper access to data. Data should be stored in structured, machine-readable formats and handled in line with privacy laws and security standards, especially when sensitive information is involved. Accessibility also depends on how well data from different systems works together, since departments often store data in different formats, so you may need to standardize or integrate these sources. Clear data pipelines and storage systems let data flow smoothly into AI models, supporting stable deployment and future scaling.
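Standardizing sources usually means mapping each system's field names and formats onto one shared schema before data enters a pipeline. This sketch assumes two hypothetical sources, a sales system that writes `DD/MM/YYYY` dates and a support tool that uses ISO dates; the mapping table and all field names are invented for illustration.

```python
from datetime import datetime

# Hypothetical source schemas: source field -> shared field, plus each source's date format.
SOURCE_SCHEMAS = {
    "sales": {
        "fields": {"cust_id": "customer_id", "signup": "signup_date"},
        "date_format": "%d/%m/%Y",
    },
    "support": {
        "fields": {"customerId": "customer_id", "created": "signup_date"},
        "date_format": "%Y-%m-%d",
    },
}


def to_common_schema(record: dict, source: str) -> dict:
    """Rename fields and normalize types so records from any source line up."""
    schema = SOURCE_SCHEMAS[source]
    out = {shared: record[src] for src, shared in schema["fields"].items() if src in record}
    if "customer_id" in out:
        out["customer_id"] = str(out["customer_id"])  # one ID type across systems
    if "signup_date" in out:
        parsed = datetime.strptime(out["signup_date"], schema["date_format"])
        out["signup_date"] = parsed.date().isoformat()  # store dates as YYYY-MM-DD
    out["source"] = source  # keep track of where each record came from
    return out


print(to_common_schema({"cust_id": 42, "signup": "05/03/2024"}, "sales"))
print(to_common_schema({"customerId": "42", "created": "2024-03-05"}, "support"))
```

Keeping the mappings in one table, rather than scattering renames across scripts, makes it easier to add a new source later.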

Step 3: Select The Right AI Tech Stack

The technology you choose must match the tasks your AI system will handle, whether that includes predictive modeling, natural language processing (NLP), or computer vision. You need to decide which model architecture and method best fit your overall AI approach. For example, machine learning methods like supervised learning work well when labeled data is available, while unsupervised learning is better for clustering or detecting unusual patterns. If your goal is language understanding, a language model may be the right fit, while vision-based tasks often rely on deep learning models such as convolutional neural networks (CNNs). Selecting the right tools for the task leads to better performance and smoother artificial intelligence implementation.
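The matching rules in this paragraph can be written down as a simple first-pass heuristic. The function below only restates that guidance under assumed task labels (`"nlp"`, `"vision"`, `"anomaly"` and the others are invented here); real selection should still compare candidate models on your own data.

```python
def suggest_approach(task: str, has_labels: bool) -> str:
    """First-pass heuristic restating the guidance above; not a substitute for benchmarking."""
    task = task.lower()
    if task in {"nlp", "text", "language"}:
        return "language model"
    if task in {"vision", "image"}:
        return "deep learning vision model (e.g. CNN)"
    if task in {"clustering", "anomaly"}:
        return "unsupervised learning"  # no labels needed to group data or spot outliers
    if has_labels:
        return "supervised learning"  # labeled history available, e.g. past sales outcomes
    return "unsupervised learning, or collect labels first"


for task, labels in [("forecast", True), ("anomaly", False), ("vision", True), ("nlp", False)]:
    print(task, "->", suggest_approach(task, labels))
```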

Beyond choosing models, you also need to think about the platforms and infrastructure that will support your system. Cloud providers offer flexible processing and storage options, which is useful if your organization lacks strong on-premises resources. Open-source libraries such as scikit-learn and Keras provide ready-made algorithms and model structures, helping teams reduce development time and move faster toward deployment.

Step 4: Build An AI-Ready Team

A capable team can manage the challenges of AI development, deployment, and ongoing support. You need a mix of roles, including data scientists, machine learning engineers, and software developers, each contributing their own expertise. Data scientists focus on analyzing patterns in data, building algorithms, and refining models. Machine learning engineers connect data science work with engineering systems, handling model training, deployment, and performance tuning. It also helps to include domain experts who understand your business needs and can interpret outputs so results stay practical and aligned with goals.

Technical skills alone are not enough. A strong team also needs support roles to keep work on track. Project managers with AI experience can organize workflows, define timelines, and monitor progress to make sure key milestones are reached. Specialists in ethics or compliance help confirm that systems follow data privacy laws and internal guidelines. Training existing staff, especially those in data or IT roles, is often a cost-effective way to strengthen the team. This approach lets you use internal knowledge while building a culture of ongoing learning. A well-prepared team not only supports the current rollout but also builds the foundation for future AI growth and change.

Step 5: Build An Organizational Culture That Supports AI

Creating a culture that supports innovation helps employees accept change, test new ideas, and take part in AI adoption. This starts with leadership that encourages openness, creativity, and curiosity, pushing teams to think about how AI can add value and improve daily operations. Leaders should share a clear vision for AI, explain its benefits, and address common concerns to build trust. This kind of environment also reflects best practices for adopting AI in organizations, especially as companies explore new approaches like implementing agentic AI in planning processes. Strong AI implementation planning supports this shift by giving teams clear direction while they adapt.

Running pilot projects gives teams a chance to test small AI use cases before full rollout. This creates a low-risk way to evaluate capabilities, gather insights, and adjust the approach when needed. When companies support innovation in this way, they improve the success of individual AI projects and build a workforce that can adapt and grow with future AI initiatives.

Step 6: Manage Risks And Establish Ethical AI Policies

AI systems, especially those handling sensitive data, carry risks tied to data privacy, model bias, security gaps, and unintended outcomes. To manage these risks, organizations should carry out detailed assessments at each stage of development, identifying where predictions may fail, produce unfair outcomes, or expose data. Strong data protection practices, including anonymization, encryption, and access control, help safeguard information. Regular testing and monitoring in real environments also help detect unexpected behavior or bias, allowing teams to refine models and improve fairness over time.
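A common data protection technique is pseudonymization: replacing direct identifiers with keyed hashes so datasets can still be joined without exposing the raw values. This sketch uses Python's standard `hmac` and `hashlib` modules; the field names and demo key are assumptions, and in production the key would come from a secrets manager. Under GDPR, pseudonymized data is still personal data, so this complements encryption and access control rather than replacing them.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Replace an identifier with an HMAC-SHA256 token.

    The same value and key always yield the same token, so joins still work.
    Without the key, tokens cannot be recomputed, which blocks simple lookups
    of known emails or IDs against the masked data.
    """
    normalized = value.strip().lower()  # assumption: identifiers are case-insensitive, like emails
    return hmac.new(secret_key, normalized.encode("utf-8"), hashlib.sha256).hexdigest()


def mask_record(record: dict, sensitive_fields: set, secret_key: bytes) -> dict:
    """Return a copy of the record with sensitive fields pseudonymized."""
    return {
        field: pseudonymize(str(value), secret_key) if field in sensitive_fields else value
        for field, value in record.items()
    }


DEMO_KEY = b"demo-only-key"  # placeholder; load a real key from a secrets store
masked = mask_record({"email": "Ana@Example.com", "plan": "pro"}, {"email"}, DEMO_KEY)
print(masked)
```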

Setting clear ethical guidelines alongside risk management practices ensures that AI use follows both legal standards and company values. These guidelines should cover fairness, accountability, transparency, and respect for user choice. A cross-functional AI ethics committee or review group can oversee projects, review potential social risks, and confirm compliance with regulations like GDPR or CCPA. Embedding these principles helps reduce legal and reputational risk while strengthening trust with customers and stakeholders.

Step 7: Test And Evaluate AI Models

Testing and evaluating models is necessary to confirm that they are accurate, reliable, and ready to deliver value in real-world use. Before deployment, models should go through strict testing using separate validation and test datasets to measure performance. This helps you see if the model can generalize well and handle new data correctly. Common metrics such as accuracy, precision, recall, and F1 score are used to assess results, depending on the model’s purpose. Testing should also check for bias or systematic errors that could lead to unintended outcomes, such as unfair results in decision-making models. Careful evaluation of these metrics helps teams confirm that the system is ready for release as part of a solid AI implementation planning process.
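For a binary classifier, these metrics follow directly from counts of true positives, false positives, and false negatives on a held-out test set. The sketch below computes them by hand for clarity; in practice, scikit-learn's `sklearn.metrics` module (`accuracy_score`, `precision_score`, `recall_score`, `f1_score`) covers the same calculations. The labels are made-up sample data.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 for one positive class."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need two non-empty sequences of equal length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)  # false alarms
    fn = sum(1 for t, p in pairs if t == positive and p != positive)  # missed cases
    correct = sum(1 for t, p in pairs if t == p)

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": correct / len(pairs), "precision": precision, "recall": recall, "f1": f1}


# Sample held-out labels (1 = positive class, e.g. "fraud").
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 1, 0, 0, 0, 0]
print(classification_metrics(y_true, y_pred))
```

Which metric matters most depends on the cost of each error type: a fraud model may favor recall, while an automated approval model may favor precision.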

Beyond initial testing, continuous evaluation is needed to maintain performance over time. Real-world conditions change, including data patterns and business needs, which can affect how the model performs. Ongoing monitoring and feedback loops help track performance, detect data drift, and trigger retraining when needed. Automated alerts and performance dashboards make it easier to spot issues early and respond quickly. Regular retraining keeps the AI system aligned with current conditions, maintaining accuracy and usefulness as new patterns appear. This mix of detailed testing and continuous evaluation keeps the AI solution stable and adaptable over time.
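One widely used way to detect data drift on a numeric feature is the Population Stability Index (PSI), which compares how values fall into bins in a baseline sample versus recent production data, summing $(a_i - e_i)\ln(a_i / e_i)$ over bins. This sketch uses equal-width bins derived from the baseline range (quantile bins are a common alternative); the bin count and example data are assumptions. A frequently cited rule of thumb treats PSI below 0.1 as stable and above 0.25 as significant drift, but thresholds should be tuned per feature.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline sample and a recent sample."""
    if not expected or not actual:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid zero width when the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp out-of-range values into edge bins
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


baseline = [i / 100 for i in range(100)]      # feature values seen at training time
recent = [0.5 + i / 200 for i in range(100)]  # production values shifted upward
print(psi(baseline, baseline))  # 0.0 for identical samples
print(psi(baseline, recent) > 0.25)
```

A check like this can run on a schedule and feed the automated alerts and retraining triggers described above.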

Step 8: Prepare For Scaling And Continuous Improvement

Scalability is a key factor in any successful AI rollout, as it allows systems to handle increasing amounts of data, users, or processes without losing performance. When planning for growth, you should select infrastructure and frameworks that support expansion, whether through cloud services, distributed systems, or modular design. Cloud platforms often work well for scaling AI, since they provide flexible resources and tools to manage higher workloads. This flexibility makes it easier to add new data, users, or capabilities over time as business needs change. A scalable setup helps you get more long-term value from your system and lowers the chance of expensive rework later.

The AI system should stay accurate, useful, and aligned with changing conditions over time. This requires retraining models regularly with updated data to prevent performance decline, along with monitoring results to catch bias or errors early. Feedback from users and stakeholders should also be used to refine the system based on real usage. Continuous improvement may include updating algorithms, adding new capabilities, or adjusting model parameters to match new business needs. This approach keeps the system reliable and effective while building long-term trust across the organization.

As organizations of all sizes aim to improve workflows and extract more value from their data using AI tools, it is important to keep goals aligned with business priorities. AI should support those priorities, not exist as a standalone experiment. It is easy to follow trends, especially with new tools appearing frequently. Still, real success comes from a clear approach that focuses on meaningful outcomes and fits the specific needs of your organization.

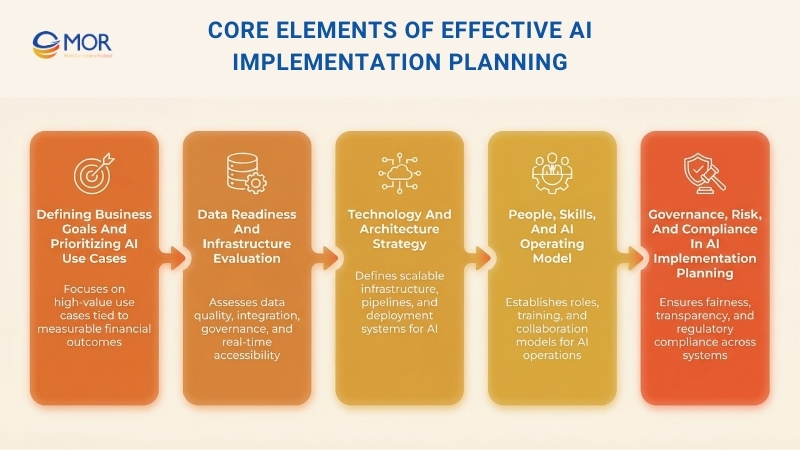

Core Elements Of Effective AI Implementation Planning

Every organization follows a different path with AI. Still, the foundation of a mature roadmap stays consistent. These elements decide whether AI remains a set of isolated experiments or grows into a scalable business capability through strong AI implementation planning.

Defining Business Goals And Prioritizing AI Use Cases

Every roadmap starts with a simple but important question: which business outcomes should AI improve?

The most effective programs connect use cases directly to measurable P&L drivers such as lower operating costs, better forecast accuracy, reduced churn, faster cycle times, and higher revenue per customer. This kind of alignment is a key part of successful AI implementation planning.

This stage often involves structured workshops to map AI opportunities and identify high-value use cases. These decisions are based on feasibility, data readiness, and clear business impact. Instead of chasing trends, the focus stays on use cases that bring steady operational or financial value.
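Workshop outcomes are easier to compare when every candidate use case is rated on the same criteria. The sketch below applies a weighted score to 1 to 5 ratings for business impact, feasibility, and data readiness; the weights, use-case names, and ratings are illustrative assumptions that each organization would set for itself.

```python
# Illustrative weights (sum to 1.0); agree on your own in the prioritization workshop.
WEIGHTS = {"business_impact": 0.5, "feasibility": 0.3, "data_readiness": 0.2}


def score(use_case: dict) -> float:
    """Weighted score from 1-5 ratings on each criterion."""
    return sum(use_case[criterion] * weight for criterion, weight in WEIGHTS.items())


def prioritize(use_cases: list) -> list:
    """Return use cases ordered from highest to lowest weighted score."""
    return sorted(use_cases, key=score, reverse=True)


candidates = [
    {"name": "Invoice triage", "business_impact": 4, "feasibility": 5, "data_readiness": 4},
    {"name": "Churn prediction", "business_impact": 5, "feasibility": 3, "data_readiness": 2},
    {"name": "Demand forecasting", "business_impact": 5, "feasibility": 4, "data_readiness": 2},
]
for uc in prioritize(candidates):
    print(f"{uc['name']}: {score(uc):.1f}")
```

A moderately valuable use case with ready data can outrank a high-impact one with weak data, which matches the focus on steady value over trends.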

Data Readiness And Infrastructure Evaluation

No AI roadmap works without strong data foundations. This step reviews the current state of enterprise data across different levels, starting from Level 0, which represents siloed or unavailable data, up to Level 4, where data is real-time, governed, and ready for production use.

A structured Data Readiness Scorecard is often used to assess:

- Data availability and completeness

- Quality, lineage, and consistency

- Integration across ERP, CRM, product, and operational systems

- Governance and access control

- Real-time or batch access

- Security and compliance readiness

Around 70% of AI failures stem from unresolved data issues, according to research from Turning Data into Wisdom. This makes data readiness the most important phase of any AI roadmap. A well-defined plan helps identify these gaps early, so teams do not build solutions on weak or incomplete data foundations.
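A scorecard like this can be kept as a simple mapping from each dimension to a level from 0 to 4. In the sketch below, the dimension keys mirror the list above, and treating the weakest dimension as the overall level is an assumption: it reflects the idea that one gap, such as missing integration, can block production use even when other scores are high.

```python
DIMENSIONS = (
    "availability_completeness",
    "quality_lineage_consistency",
    "system_integration",
    "governance_access_control",
    "realtime_batch_access",
    "security_compliance",
)


def readiness_summary(scores: dict) -> dict:
    """Summarize 0-4 readiness levels (0 = siloed or unavailable, 4 = real-time and governed)."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    for d in DIMENSIONS:
        if not 0 <= scores[d] <= 4:
            raise ValueError(f"{d} must be between 0 and 4")
    levels = [scores[d] for d in DIMENSIONS]
    floor = min(levels)
    return {
        "average": sum(levels) / len(levels),
        "overall_level": floor,  # weakest dimension caps overall readiness (assumption)
        "weakest": [d for d in DIMENSIONS if scores[d] == floor],
    }


example = {
    "availability_completeness": 3,
    "quality_lineage_consistency": 2,
    "system_integration": 1,
    "governance_access_control": 3,
    "realtime_batch_access": 2,
    "security_compliance": 3,
}
print(readiness_summary(example))
```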

Technology And Architecture Strategy

This stage defines the full technical setup needed to support scalable AI. It includes cloud environments, data pipelines, security layers, MLOps tools, monitoring systems, model registries, and clear deployment paths. A strong setup is a key part of any effective AI implementation planning effort.

Many enterprises focus too much on models and not enough on architecture. Gartner predicts that more than 50% of enterprise AI initiatives will not reach production by 2027 because core architecture is missing. A well-defined roadmap outlines the entire stack and helps avoid “tech sprawl”, where teams collect disconnected tools without a clear plan.

People, Skills, And AI Operating Model

AI transformation is not only about technology. It also changes how teams work and how decisions are made. A mature roadmap defines the operating model needed to support AI in production. This includes clear ownership of models, responsibility for pipelines, collaboration between teams, and updates to roles where needed.

This may include:

- Setting up an AI Center of Excellence (CoE)

- Redefining roles across data engineering, ML engineering, and business teams

- Training teams in prompt engineering, model monitoring, and data stewardship

- Identifying skill gaps that require hiring or external support

According to PwC’s 2026 survey, 38% of respondents said skill gaps were one of the top three barriers to scaling AI agents, ranking higher than both funding and tools.

Governance, Risk, And Compliance In AI Implementation Planning

As AI systems begin to take part in decision-making, governance is no longer optional. Organizations must handle fairness, transparency, data privacy, security, and model risk, especially in industries with strict regulations. These concerns should be addressed early within a structured AI implementation planning approach.

A strong roadmap defines governance practices such as:

- Data access control and clear audit trails

- Model explainability and fairness checks using tools like SHAP, LIME, or counterfactual methods

- Continuous bias monitoring

- Drift detection and incident response processes

- Policy frameworks aligned with regulations such as GDPR, HIPAA, and emerging AI laws
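Continuous bias monitoring often starts by comparing outcome rates across groups. The sketch below computes the demographic parity difference, the gap between the highest and lowest positive-prediction rates; libraries such as Fairlearn provide this metric as `demographic_parity_difference`. The group labels, predictions, and 0.1 alert threshold are assumptions for illustration, and the right fairness metric depends on the use case.

```python
from collections import defaultdict


def selection_rates(groups, predictions, positive=1):
    """Share of positive predictions within each group."""
    totals, positives = defaultdict(int), defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        if pred == positive:
            positives[group] += 1
    return {g: positives[g] / totals[g] for g in totals}


def parity_check(groups, predictions, max_gap=0.1):
    """Flag when positive-prediction rates differ across groups by more than max_gap."""
    rates = selection_rates(groups, predictions)
    gap = max(rates.values()) - min(rates.values())
    return {"rates": rates, "parity_gap": gap, "alert": gap > max_gap}


groups = ["A", "A", "A", "A", "B", "B", "B", "B"]
approved = [1, 1, 1, 0, 1, 0, 0, 0]
print(parity_check(groups, approved))
```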

Companies without mature AI governance face higher exposure to compliance issues and reputational damage. Embedding governance early in the roadmap helps ensure that systems meet requirements from the start and remain compliant throughout their lifecycle.

Why These AI Implementation Planning Components Matter

Each part of a roadmap plays a role in turning AI from isolated efforts into a stable business capability. Without strong AI implementation planning, common issues can quickly slow progress and reduce value.

| Challenge Without a Roadmap | Impact | How Core Components Solve It |
|---|---|---|
| Siloed data | Low accuracy, unreliable models | Data readiness and pipelines |
| Tool overload | High costs, lack of integration | Technology and architecture strategy |
| Weak governance | Bias, compliance risks | Governance, risk, and compliance frameworks |
| Undefined ownership | Slow delivery, unclear accountability | People and operating model |
| Poor use-case selection | No measurable return | Business-aligned prioritization |

Common AI Implementation Planning Mistakes Enterprises Should Avoid

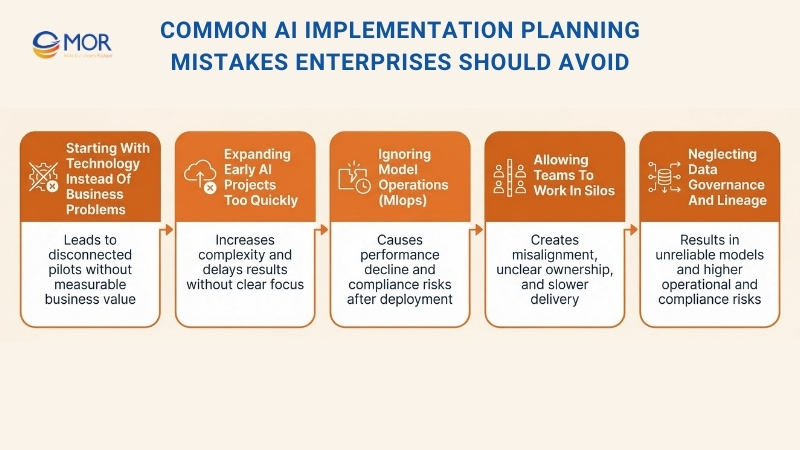

Even as AI adoption grows, many enterprises still struggle to move beyond early pilots. The issue is rarely a lack of ambition. More often, it comes from structural mistakes made during AI implementation planning. These issues slow progress, raise costs, and reduce confidence among leadership teams.

Understanding these problems is key if you want a roadmap that delivers real results.

Starting With Technology Instead Of Business Problems

Many organizations begin with tools, vendors, or model building before defining the business outcomes AI should support. Very few connect AI initiatives to P&L-level metrics, which explains why scaling often fails. Without alignment to business goals, AI efforts turn into scattered pilots with little connection to each other.

Expanding Early AI Projects Too Quickly

Another common mistake is taking on too much too soon. Companies often try to roll out AI across several systems or departments at once, which increases complexity and delays results. Projects lose momentum when teams try to do everything in parallel instead of focusing on a few high-value use cases first.

Ignoring Model Operations (MLOps)

Many enterprises invest heavily in building models but ignore what happens after deployment. Without proper processes for monitoring, retraining, and managing models, performance drops over time or systems fall out of compliance. This risk grows when MLOps pipelines are missing. In regulated industries, where explainability and auditability are required, this becomes a serious issue within any AI implementation planning effort.

Allowing Teams To Work In Silos

Siloed execution often breaks AI roadmaps. Data teams, business units, and compliance groups may work separately, which leads to misaligned goals and unclear ownership. This lack of coordination slows delivery and creates confusion across teams.

Neglecting Data Governance And Lineage

Many organizations also underestimate the importance of data contracts, lineage, and governance. AI systems rely on clean, consistent, and traceable data, yet clear standards are often missing. Without strong governance, even well-designed AI solutions can become unreliable or expose the business to risk.

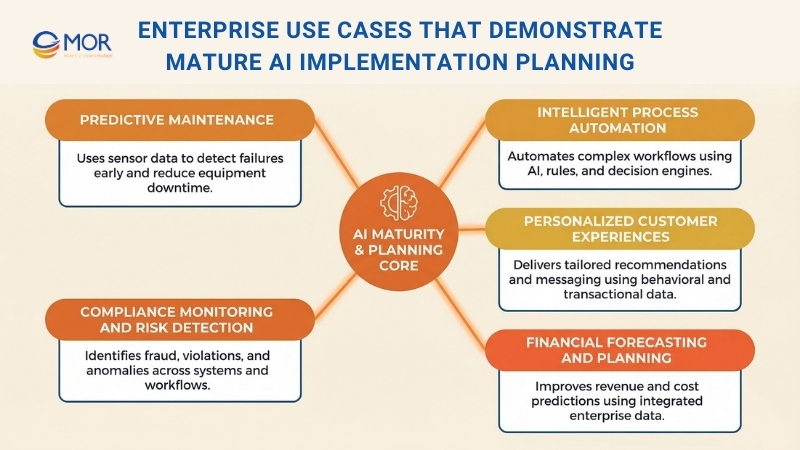

Enterprise Use Cases That Demonstrate Mature AI Implementation Planning

Mature roadmaps focus on use cases that connect multiple systems, support core workflows, and scale across departments. Strong AI implementation planning helps organizations move from isolated experiments to solutions that deliver value across the business. Below are examples of initiatives companies often launch once they reach a higher level of AI readiness.

Case studies across industries

- Healthcare: AI in Healthcare, AI Solutions for Healthcare, AI Tools in Healthcare, Conversational AI in Healthcare

- Finance: AI in Financial Services, AI in Wealth Management, Generative AI in Banking

- HR & Recruiting: AI in HR, AI in Recruiting Automation, Gamification in Recruitment, Best AI Tools for HR

- E-commerce & Sales: AI in Ecommerce, AI Tools for Ecommerce, AI in Sales

- Manufacturing & Project Management: AI in Manufacturing, AI in Project Management

Predictive Maintenance

Predictive maintenance models use IoT sensor data, machine telemetry, and past failure patterns to detect issues before equipment breaks down. This allows teams to plan repairs early, reduce downtime, and extend the life of assets.

These models are often applied in environments with distributed equipment or large fleets. Telemetry data is combined with cloud-based machine learning pipelines to provide real-time monitoring and automated alerts.
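A simple statistical baseline for such alerts is a rolling z-score: each new sensor reading is compared with the mean and standard deviation of the readings just before it. Production predictive maintenance models typically go further and learn from historical failure patterns, so treat this as an illustrative starting point; the window size, threshold, and vibration readings are assumptions.

```python
from collections import deque
from statistics import mean, stdev


def anomaly_alerts(readings, window=20, threshold=3.0):
    """Yield (index, value, z_score) for readings far outside the trailing window."""
    history = deque(maxlen=window)  # most recent `window` readings
    for i, value in enumerate(readings):
        if len(history) == window:
            mu, sigma = mean(history), stdev(history)
            if sigma > 0:
                z = (value - mu) / sigma
                if abs(z) > threshold:
                    yield i, value, z
        history.append(value)


# Hypothetical vibration readings: a steady cycle, then a spike at index 30.
readings = [10.0 + 0.1 * (i % 5) for i in range(30)] + [15.0]
for index, value, z in anomaly_alerts(readings):
    print(f"alert at reading {index}: value={value}, z={z:.1f}")
```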

Intelligent Process Automation

Enterprises apply AI to automate workflows that involve complex decisions, such as credit checks, claims handling, demand forecasting, or quality control. These systems combine reasoning, anomaly detection, and rule-based logic to support operations.

This type of solution often brings together RPA, AI and machine learning models, and decision engines in a single workflow. It is widely used by finance and supply chain teams, where accuracy, audit trails, and real-time insights are required, making it a core part of scalable AI implementation planning.

Personalized Customer Experiences

Advanced personalization models use behavioral, transactional, and contextual data to deliver tailored recommendations, custom messaging, churn predictions, and next-best-action suggestions.

MOR Software has developed AI-powered personalization engines for SaaS and e-commerce clients. These systems support real-time segmentation and deliver dynamic content across digital channels.

Financial Forecasting And Planning

AI models combine historical usage data, macroeconomic signals, sales pipelines, and operational inputs to improve forecasts for revenue, costs, demand, and cash flow.

MOR Software builds forecasting solutions for mid-market and enterprise finance teams. These systems connect ERP and CRM data with AI agents that assist with planning, budgeting, and variance analysis.

Compliance Monitoring And Risk Detection

Compliance-focused AI tracks transactions, system logs, user activity, and workflows to detect violations, fraud, policy breaches, or unusual behavior. This type of solution is often part of enterprise-level AI implementation planning, especially in regulated industries.

As regulatory pressure increases, automation built with governance in mind is becoming necessary rather than optional.

Enterprise AI Use Case Fit Matrix

Different use cases require different levels of data readiness, integration, and investment. A clear mapping helps organizations choose the right starting point based on their current capabilities.

| Use Case Type | Data Maturity Required | Cross-System Integration | ROI Potential | Typical Fit |
|---|---|---|---|---|
| Predictive Maintenance | Yes | Yes | High | Manufacturing, logistics |
| Intelligent Automation | Medium–High | Medium | High | Finance, supply chain |
| Personalization Engines | High | Medium–High | High | Retail, SaaS |
| AI Forecasting | Medium–High | High | Very High | FP&A, operations |
| Compliance Automation | High | High | High | Regulated industries |

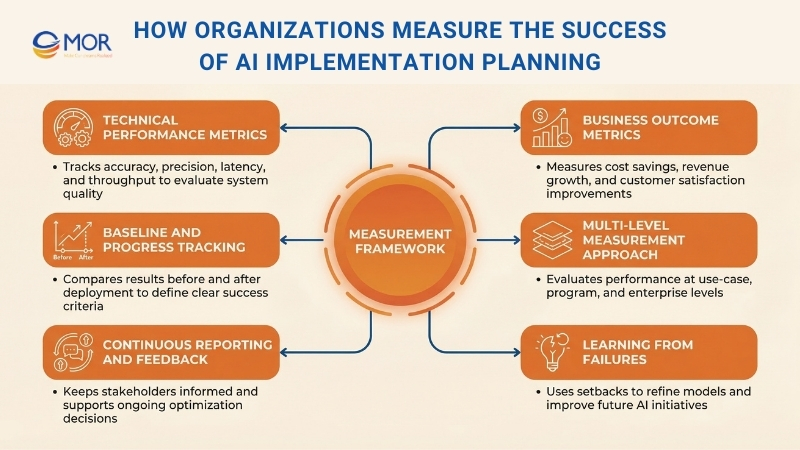

How Organizations Measure The Success Of AI Implementation Planning

Measuring the success of enterprise AI requires clear frameworks that track both technical performance and real business value. Strong AI implementation planning depends on this balance. Technical metrics show how well systems perform: accuracy for prediction quality, precision and recall for different types of errors, latency for response speed, and throughput for processing volume. Yet strong technical results alone do not justify investment. You also need metrics that connect AI outcomes directly to business goals.
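Latency is usually reported as percentiles rather than averages, because a few slow responses can matter more to users than the typical one. The sketch below uses the nearest-rank percentile method and derives throughput from the number of requests in a measurement window; the sample latencies and window length are invented.

```python
import math


def percentile(values, pct):
    """Nearest-rank percentile: smallest value with at least pct% of samples at or below it."""
    if not values or not 0 < pct <= 100:
        raise ValueError("need non-empty values and 0 < pct <= 100")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


def serving_summary(latencies_ms, window_seconds):
    """Median and tail latency plus requests per second over one measurement window."""
    return {
        "p50_ms": percentile(latencies_ms, 50),
        "p95_ms": percentile(latencies_ms, 95),
        "throughput_rps": len(latencies_ms) / window_seconds,
    }


# Ten sample requests observed over a 5-second window; one slow outlier.
latencies = [120, 95, 110, 480, 105, 98, 130, 101, 99, 115]
print(serving_summary(latencies, window_seconds=5))
```

Here the median stays low while p95 exposes the slow outlier, which is why tail latency is usually the number teams set targets on.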

Business metrics should reflect the exact objectives AI is meant to improve. If the goal is to lower operational costs, you should compare actual savings before and after deployment. If the focus is customer experience, you can track satisfaction scores and retention rates. If revenue growth is the target, measure new revenue or higher conversion rates linked to AI systems. Setting baselines before deployment allows you to measure progress clearly and define success criteria that guide decisions. Both short-term wins and long-term value need to be tracked to get a full picture.

Measurement should happen across several levels to give a complete view of value. Metrics at the use-case level show how individual solutions perform. Program-level metrics evaluate groups of related initiatives. Enterprise-level metrics track how AI changes the business as a whole. Regular reporting to executives and stakeholders keeps progress visible, builds confidence for further investment, and allows teams to adjust when results fall short. Companies should also be open about challenges and failures, using them as learning points rather than reasons to stop. Research from MIT Sloan Management Review shows that consistent measurement is what separates organizations that gain strong value from AI from those that invest without clear results.

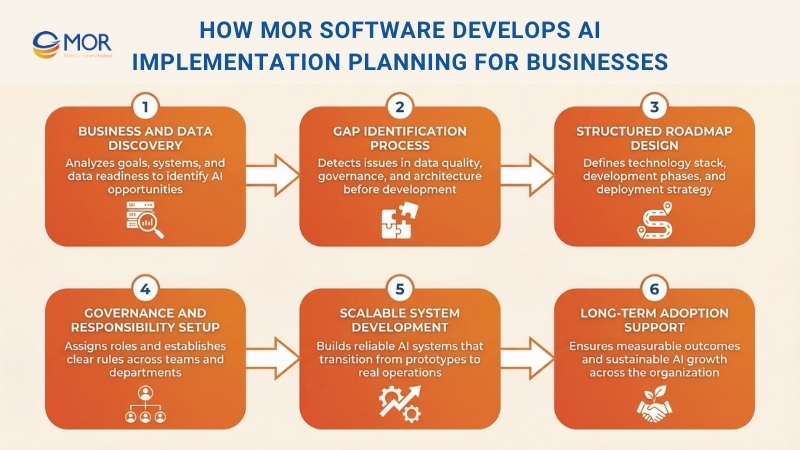

How MOR Software Develops AI Implementation Planning for Businesses

At MOR Software, we support organizations in turning AI ideas into structured execution. Our AI implementation planning approach begins with a deep understanding of your business goals, current systems, and operational challenges. We work closely with your stakeholders to find where AI can deliver measurable value, rather than creating isolated experiments.

Our team starts with business and data discovery. We review available data sources, system connections, and infrastructure readiness. This step helps uncover gaps in data quality, governance, or architecture before development begins.

Next, we build a clear AI adoption roadmap. Our offshore AI developers define the technology stack, development stages, and deployment approach. We also set governance guidelines and assign responsibilities across technical teams and business units.

Throughout the process, we focus on creating systems that can scale and perform reliably. This approach helps you move from early prototypes to custom AI solutions that run in real operations. With structured planning, defined milestones, and measurable outcomes, we support businesses in adopting AI with confidence and long-term stability. Contact MOR Software today to start building your AI implementation roadmap.

Conclusion

AI success does not come from tools alone. It comes from clear direction, strong execution, and consistent follow-through. With the right AI implementation planning, you can move from small experiments to real business results that scale. If you are ready to build a practical AI roadmap that delivers value, MOR Software is here to help. Reach out to our team to start your AI journey with confidence.

MOR SOFTWARE

Frequently Asked Questions (FAQs)

What is AI implementation planning?

AI implementation planning is the process of preparing an organization to deploy AI systems in real operations. It involves defining business goals, evaluating data readiness, selecting technologies, and establishing governance before development begins.

Why is AI implementation planning important for businesses?

Without proper planning, many AI projects fail to move beyond pilot stages. A structured plan helps align AI initiatives with business objectives, manage risks, and ensure systems deliver measurable value.

What are the key steps in AI implementation planning?

Most organizations follow several stages, including defining business objectives, assessing data quality, selecting suitable AI technologies, building skilled teams, managing risks, testing models, and preparing for scalability.

How long does AI implementation planning usually take?

The timeline depends on the organization’s size, data maturity, and project complexity. Initial planning can take a few weeks to a few months before development and deployment begin.

What challenges do companies face during AI implementation planning?

Common challenges include poor data quality, unclear business goals, lack of skilled AI professionals, integration difficulties with existing systems, and governance or compliance concerns.

What role does data play in an AI rollout strategy?

Data is the foundation of any AI system. Organizations must evaluate data availability, accuracy, consistency, and accessibility before building models to avoid unreliable results.

Which industries benefit most from AI implementation planning?

Industries with large datasets and complex decision processes benefit the most. These include finance, healthcare, manufacturing, retail, logistics, and telecommunications.

How do companies measure the success of AI implementation?

Success is typically measured through technical metrics such as model accuracy and system performance, as well as business metrics like cost savings, revenue growth, and operational efficiency.

Can small and medium businesses create an AI adoption plan?

Yes. Small and medium businesses can adopt AI gradually by starting with focused use cases, improving data management practices, and scaling AI initiatives as capabilities grow.

What is the difference between AI strategy and AI implementation planning?

AI strategy defines long-term vision and goals for AI adoption. AI implementation planning focuses on the practical steps required to deploy AI systems, including technology choices, infrastructure preparation, and operational processes.
